NVIDIA and Google Cloud Integrate Blackwell GPUs and NIM Microservices for Agentic AI Development

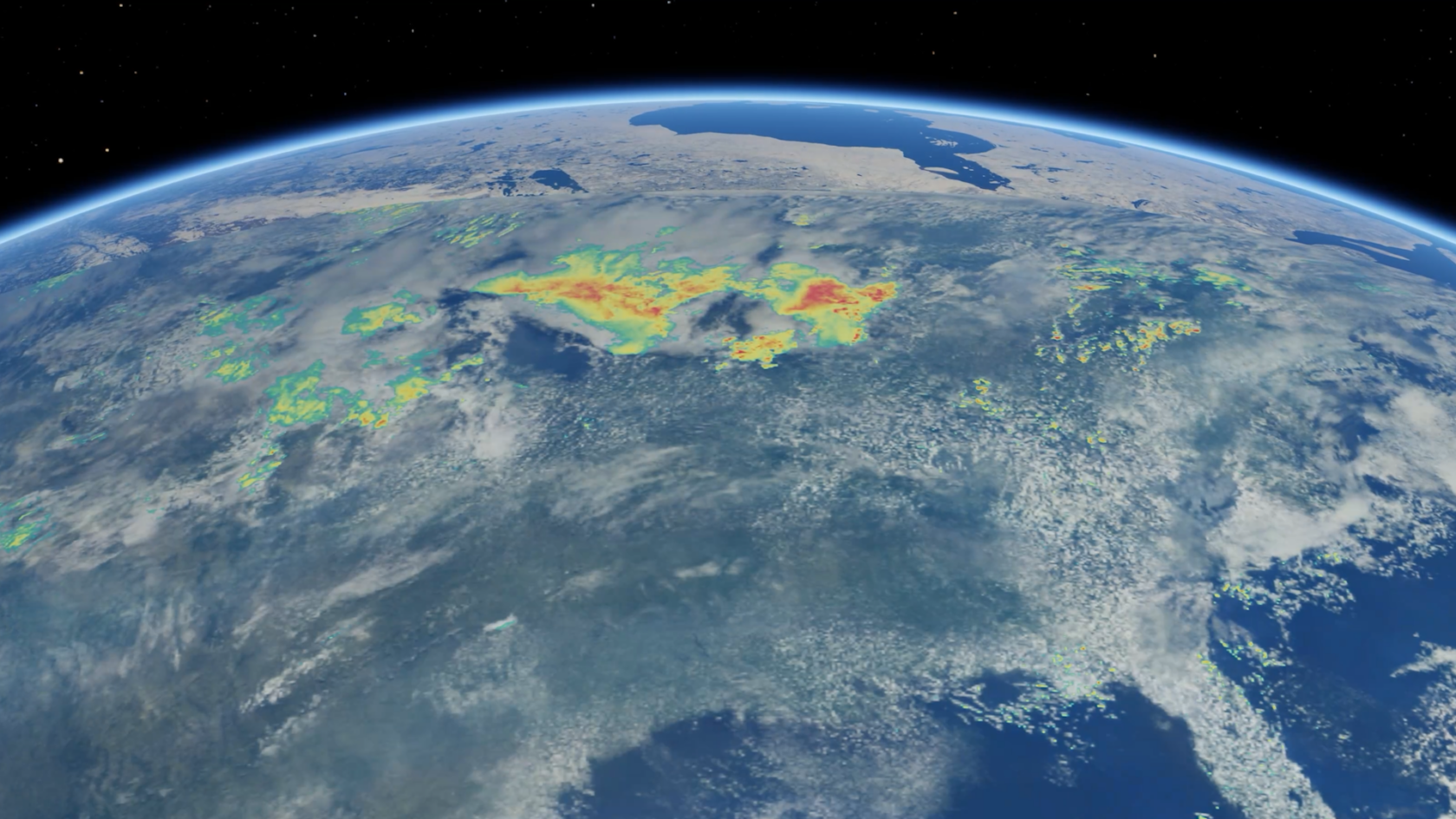

NVIDIA and Google Cloud are accelerating the development of agentic AI by making NVIDIA NIM microservices available on the Google Cloud Marketplace. This integration allows developers to deploy pre-optimized AI models directly within Vertex AI, streamlining the creation of autonomous agents that can reason and execute complex tasks. By providing a unified software stack, the partnership reduces the operational overhead of self-managing model inference and deployment cycles.

The collaboration also introduces high-performance hardware options, including the upcoming NVIDIA Blackwell platform on Google Cloud. These instances are designed to handle very large language models and generative AI workloads, providing the compute power needed for real-time physical AI simulations and large-scale industrial digital twins. The hardware is paired with improved networking to support distributed training across large clusters.

For physical AI and robotics, NVIDIA is bringing Omniverse and Earth-2 capabilities to Google Cloud infrastructure. These tools enable developers to simulate complex physical environments and climate patterns, which supports training robot controllers and building high-fidelity environmental models for industrial sectors. The result is more accurate digital representations of physical assets and processes within the cloud environment.

Cloud architects and developers should evaluate the new deployment paths within the Vertex AI Model Garden. The availability of sovereign AI solutions and regional hardware allocations may affect how teams architect their global infrastructure, particularly for organizations with strict data residency and low-latency requirements. For future capacity planning, teams should monitor current usage and review the updated pricing for Blackwell-based instances.

Comparison

| Aspect | Before | After (this announcement) |
|---|---|---|
| AI Deployment Model | Manual containerization and manual model optimization | NVIDIA NIM microservices integrated via Vertex AI |
| Compute Architecture | Standard H100 and L4 GPU instances | Next-generation Blackwell platform (B200) support |
| Physical Simulation | Limited cloud-native high-fidelity physics | NVIDIA Omniverse and Earth-2 integration on GCP |
| Developer Workflow | Fragmented toolchains for building AI agents | Unified Vertex AI and NIM orchestration environment |

Action Checklist

- Access NVIDIA NIM on Google Cloud Marketplace: verify that pre-built inference containers exist for your specific model architecture.

- Review Vertex AI Model Garden updates: check for new Blackwell-optimized model weights and configurations.

- Evaluate regional availability for Blackwell instances: availability may vary by region during the initial rollout phase.

- Update IAM roles and service accounts: ensure proper permissions for NIM microservice deployments within GCP.

- Integrate Omniverse APIs for digital twins: only necessary if your workflow requires physical AI or robotics simulation.
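
As a rough illustration of the first checklist item, the sketch below builds a smoke-test request for a deployed NIM container. It is not taken from the source. It assumes the container exposes an OpenAI-style `/v1/chat/completions` route, and the base URL and model name are hypothetical placeholders to replace with the values from your own deployment.

```python
import json
import urllib.request

# Assumption: a NIM container is reachable at this address; replace with your endpoint.
NIM_BASE_URL = "http://localhost:8000"
# Assumption: placeholder identifier; use the model name your container reports.
MODEL_NAME = "example/model-name"


def build_chat_request(prompt, base_url=NIM_BASE_URL, model=MODEL_NAME, max_tokens=64):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request, timeout=30):
    """Send the request and return the decoded JSON response body."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


if __name__ == "__main__":
    req = build_chat_request("Reply with the single word: ready")
    print(req.full_url)
    # Uncomment once an endpoint is actually running:
    # print(send(req)["choices"][0]["message"]["content"])
```

Keeping request construction separate from sending makes it possible to inspect the URL and payload before any endpoint exists, which helps when IAM permissions or regional capacity are still being sorted out.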

Source: NVIDIA

This page summarizes the original source. Check the source for full details.